|

|

Sasha Rush

ML Researcher. Cursor. NYC.

srush.research@gmail.com [cv] |

I study post-training of AI systems for coding and related tasks. I'm interested in improving model reasoning for long-horizon tasks.

Bio

From 2016 to 2026, I was a Professor at Harvard and then Cornell. My group's research was recognized with an NSF CAREER Award and a Sloan Fellowship. My students have won paper awards at conferences for NLP, Hardware, and Visualization, and gone on to do really neat things. From 2019 to 2024, I also worked as a researcher at Hugging Face and helped with early open-source LLM projects. In 2024, I helped start the Conference on Language Modeling. I have a YouTube channel and, somewhat regretfully, a Twitter. One day I will blog more.

|

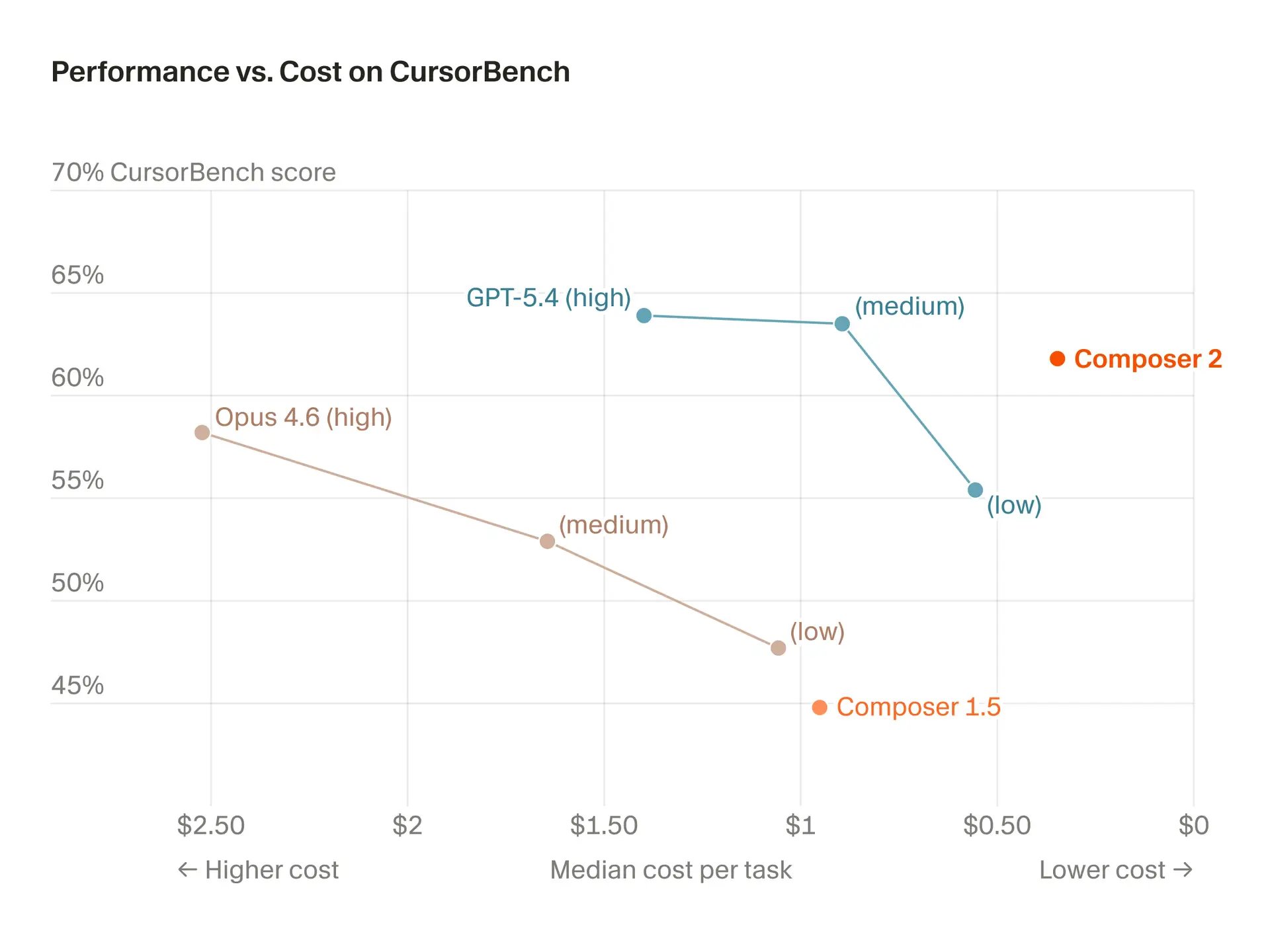

Composer 2

Cursor Team. Arxiv 2026 |

|

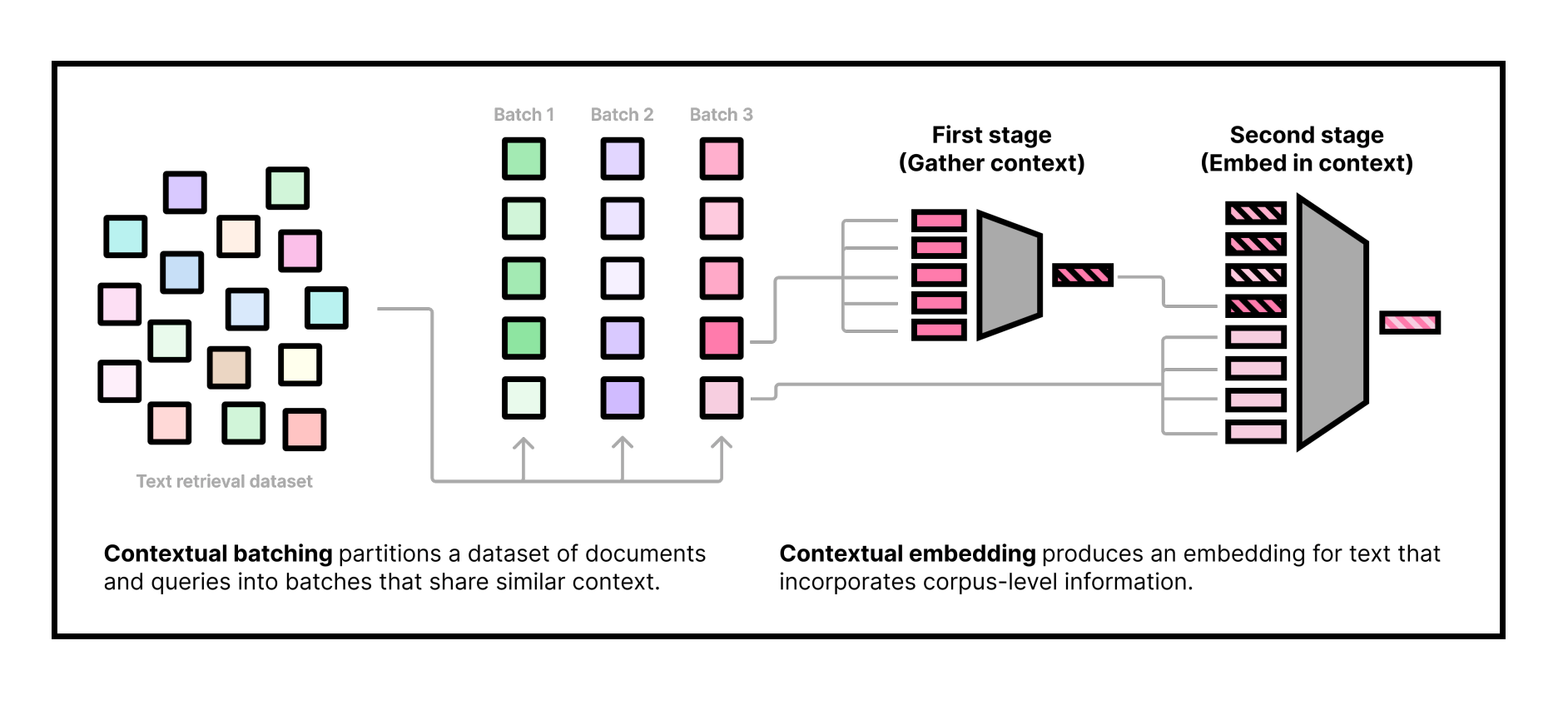

Contextual Document Embeddings

J. X. Morris, Alexander Rush. ICLR 2025 |

|

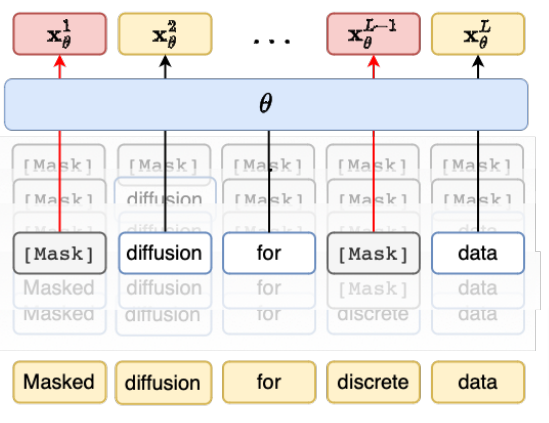

Simple and Effective Masked Diffusion Language Models

Subham Sekhar Sahoo, Marianne Arriola, Yair Schiff, Aaron Gokaslan, Edgar Marroquin, Justin T Chiu, Alexander Rush, Volodymyr Kuleshov. NeurIPS 2024 |

|

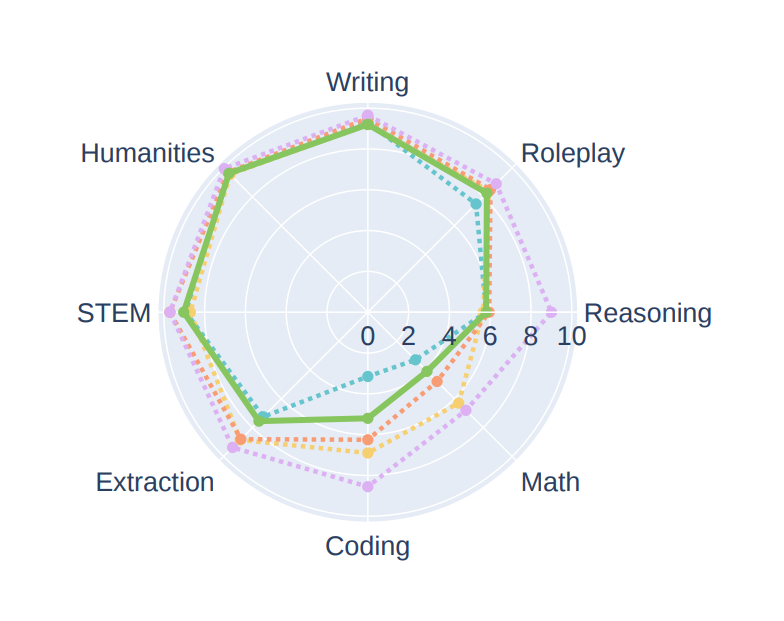

Zephyr: Direct Distillation of LM Alignment

Lewis Tunstall, Edward Beeching, Nathan Lambert, Nazneen Rajani, Kashif Rasul, Younes Belkada, Shengyi Huang, Leandro von Werra, Clémentine Fourrier, Nathan Habib, Nathan Sarrazin, Omar Sanseviero, Alexander M. Rush, Thomas Wolf. COLM 2024 |

|

Pretraining Without Attention

Junxiong Wang, Jing Nathan Yan, Albert Gu, Alexander M. Rush. EMNLP 2023 Findings |

|

Multitask prompted training enables zero-shot task generalization

Victor Sanh et al. ICLR 2022 |

|

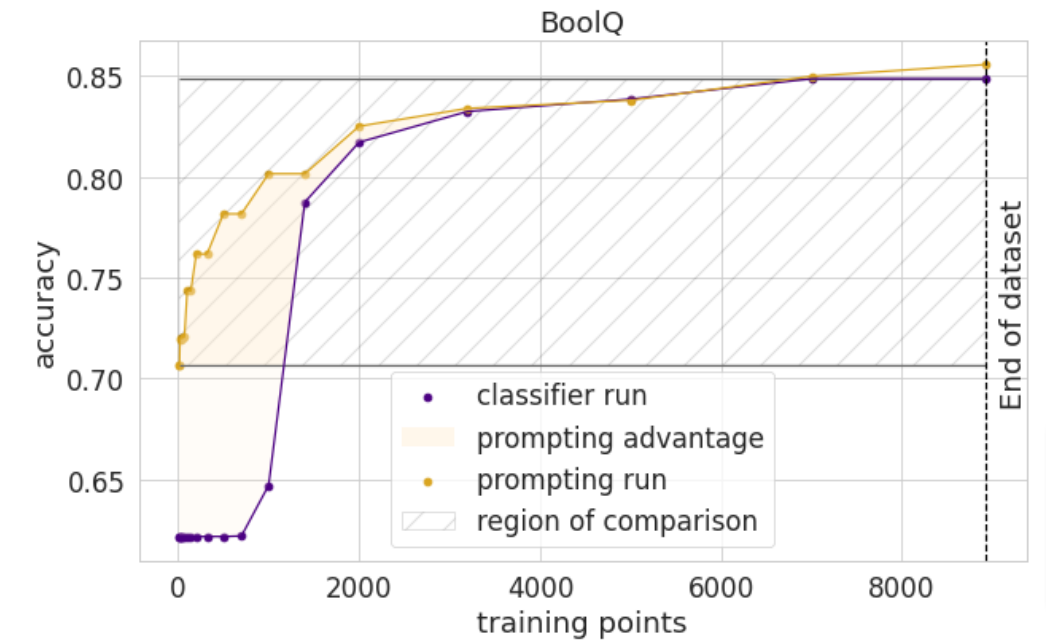

How many data points is a prompt worth?

Teven Le Scao, Alexander M. Rush. NAACL Short 2021 |

|

GPU-Puzzles

Alexander M. Rush. 2021 |

|

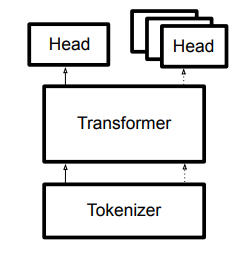

Transformers: State-of-the-art Natural Language Processing

Thomas Wolf et al. EMNLP Demos 2020 |

|

Compound Probabilistic Context-Free Grammars for Grammar Induction

Yoon Kim, Chris Dyer, Alexander M. Rush. ACL 2019 |

|

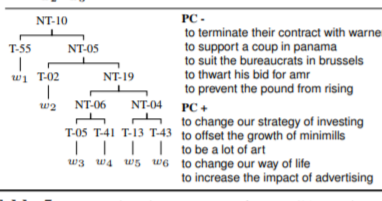

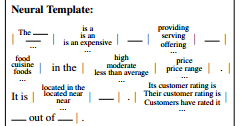

Learning Neural Templates for Text Generation

Sam Wiseman, Stuart M. Shieber, Alexander Rush. EMNLP 2018 |

|

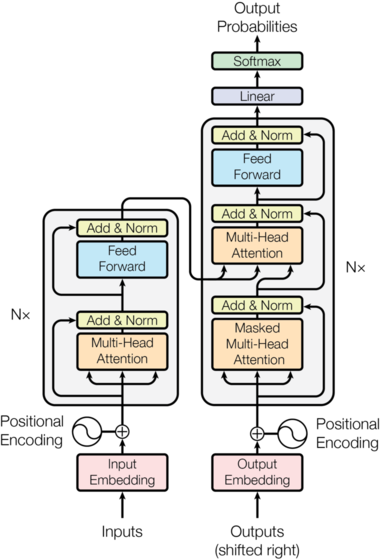

The Annotated Transformer

Alexander M. Rush. ACL NLP-OSS 2018 |

|

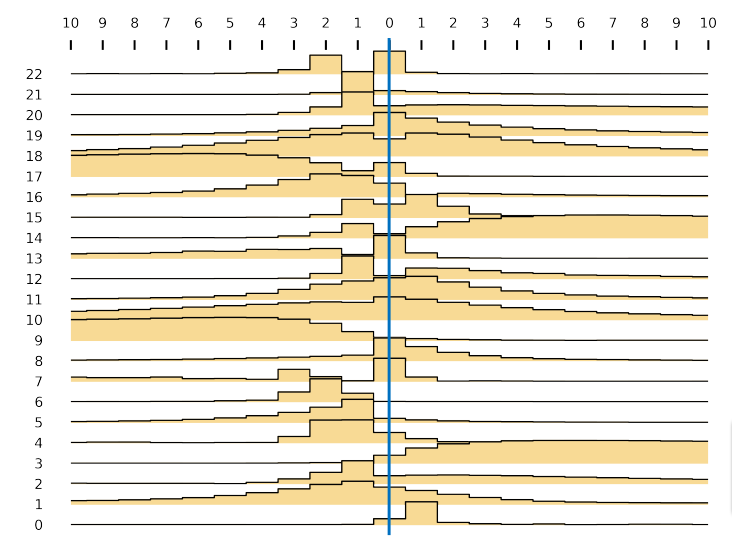

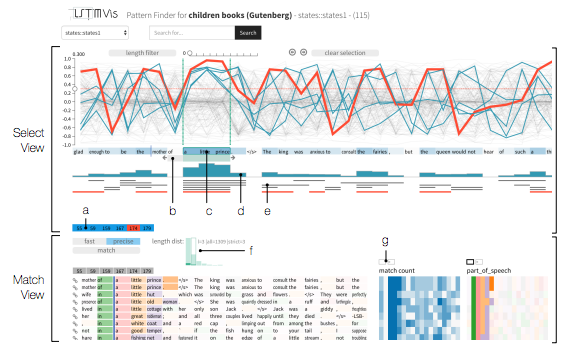

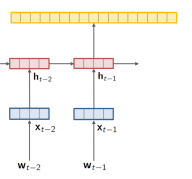

LSTMVis: A Tool for Visual Analysis of Hidden State Dynamics in Recurrent Neural Networks

Hendrik Strobelt, Sebastian Gehrmann, Hanspeter Pfister, and Alexander M. Rush. InfoVis 2017 |

|

OpenNMT: Open-Source Toolkit for Neural Machine Translation

Guillaume Klein, Yoon Kim, Yuntian Deng, Jean Senellart, Alexander M. Rush. ACL Demo 2017 |

|

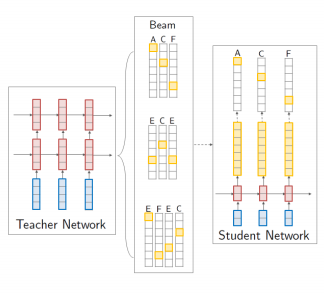

Sequence-Level Knowledge Distillation

Yoon Kim and Alexander M. Rush. EMNLP 2016 |

|

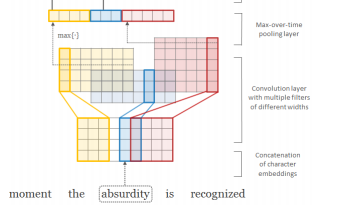

Character-Aware Neural Language Models

Yoon Kim, Yacine Jernite, David Sontag, and Alexander M. Rush. AAAI 2016 |

|

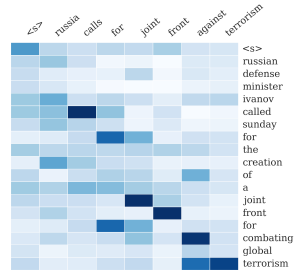

A Neural Attention Model for Abstractive Sentence Summarization

Alexander M. Rush, Sumit Chopra, and Jason Weston. EMNLP 2015 |